If you have snapshots that point to data that is being deleted, HBase will not delete that data, because you might later want to recover to that particular point in time by restoring the snapshot. So, in that case, the data the snapshot points to is moved to the archive folder. The more snapshots you have, the more the archive folder will grow, because the snapshots still need that data. I believe you have some old snapshots, and that is why your archive directory is so big. Check whether you have the relevant configuration property set in hbase-site.xml.
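To make the retention rule above concrete, here is a minimal toy model in Python. It is not HBase code and does not use any HBase API; the class `ToyTable` and the file names are made up for illustration. It only shows the idea that an archived file can be cleaned up once no snapshot references it any more.

```python
# Toy model only: this is NOT HBase code, just an illustration of the rule
# "a file stays in the archive while any snapshot still references it".

class ToyTable:
    def __init__(self, files):
        self.live = set(files)   # files the table currently serves
        self.archive = set()     # files moved aside instead of deleted
        self.snapshots = {}      # snapshot name -> frozenset of file names

    def snapshot(self, name):
        # A snapshot records only metadata: the names of the current files.
        self.snapshots[name] = frozenset(self.live)

    def compact(self, removed, added):
        # Compaction (or a delete) retires old files; they go to the archive.
        self.live -= set(removed)
        self.live |= set(added)
        self.archive |= set(removed)

    def delete_snapshot(self, name):
        del self.snapshots[name]

    def run_cleaner(self):
        # Only archived files that no snapshot references may be dropped.
        referenced = set().union(*self.snapshots.values())
        deletable = self.archive - referenced
        self.archive -= deletable
        return deletable


table = ToyTable(["hfile-a", "hfile-b"])
table.snapshot("snap1")
table.compact(removed=["hfile-a", "hfile-b"], added=["hfile-c"])
print(table.run_cleaner())          # set(): snap1 still pins both files
table.delete_snapshot("snap1")
print(sorted(table.run_cleaner()))  # ['hfile-a', 'hfile-b']
```

In this model the archive only shrinks after the old snapshot is gone, which matches the suspicion that old snapshots are what keep the archive directory large.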

HBASE ARCHIVE CLEANER SOFTWARE

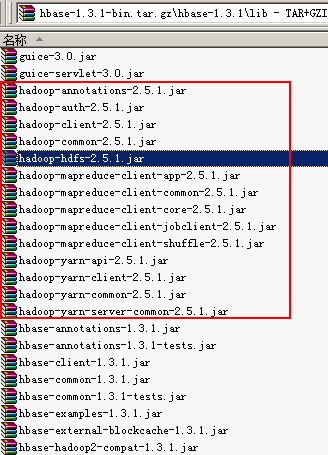

HBase is inspired by Google's Bigtable architecture and is fundamentally a non-relational, open source, column-oriented distributed NoSQL database. Written in Java, it is designed and developed by many engineers under the Apache Software Foundation.

My simple question is: how do I clean out unneeded things from the hbase "archive"? I assume manually deleting stuff via hdfs is **not** the way to go.

[?].6 T /apps/hbase/data/archive <= THIS.

ANY and all help for an hbase newbie would be really appreciated.

Brodie, I checked with one of our developers, and he sees that the archive contains tables he deleted long ago.

I am assuming you run major compactions probably once a week or on some regular schedule, so that is not an issue. Do you have a lot of snapshots? Here is how snapshots work. When you create a snapshot, it only captures metadata at that point in time. If you ever have to restore to that point in time, you restore the snapshot, and through the metadata that was captured, the snapshot knows which data to restore. Now, as HBase is running, you might be deleting data. Usually, when a major compaction runs, your deleted data is gone for good.
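If old snapshots turn out to be what is holding on to the archived data, one cautious way to act on that is to delete the snapshots you no longer need and let HBase clean up after itself, rather than removing files from HDFS by hand. The sketch below is an assumption-laden helper, not an official tool: it takes a list of snapshot names and creation times that you collect yourself (for example from `list_snapshots` in the HBase shell) and prints `delete_snapshot` lines for review. The snapshot names and the 30-day threshold are hypothetical.

```python
from datetime import datetime, timedelta


def stale_snapshot_commands(snapshots, now, max_age_days=30):
    """Return HBase shell `delete_snapshot` lines for old snapshots.

    `snapshots` is a list of (name, created_at) pairs gathered by hand,
    e.g. from the output of `list_snapshots` in the HBase shell.
    The 30-day default is an arbitrary assumption, not an HBase setting.
    """
    cutoff = now - timedelta(days=max_age_days)
    # Oldest first, so the review list starts with the likeliest candidates.
    stale = sorted((created, name) for name, created in snapshots if created < cutoff)
    return [f"delete_snapshot '{name}'" for _, name in stale]


# Hypothetical snapshot names and dates, for illustration only.
example = [
    ("orders_before_migration", datetime(2023, 1, 1)),
    ("orders_nightly", datetime(2024, 1, 30)),
    ("events_old_schema", datetime(2022, 6, 1)),
]
for line in stale_snapshot_commands(example, now=datetime(2024, 1, 31)):
    print(line)
```

The helper only generates text; nothing is deleted until someone reviews the lines and runs them in `hbase shell`. Any space held only by those snapshots should become eligible for cleanup afterwards, though how quickly it is reclaimed depends on the cluster's cleaner settings.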

Stuffing things in hbase. But I appear to be losing a bunch of disk space to the hbase "archives" folder, which is something I assume hbase is putting stuff in when tables are deleted or...?

Kylin persists all data (metadata and cube) in HBase. You may want to export the data sometimes for whatever purpose (backup, migration, troubleshooting, etc.). This page describes the steps to do this, and there is also a Java app that lets you do it easily.

Hi! So, I'm the sysadmin of a hadoop cluster. I make sure it's running and happy and secure and... OK cool, that's what our developers are doing. In reviewing HDFS disk use lately, I noticed our numbers are kinda high. After some digging, it appears all of the space is going into hbase.